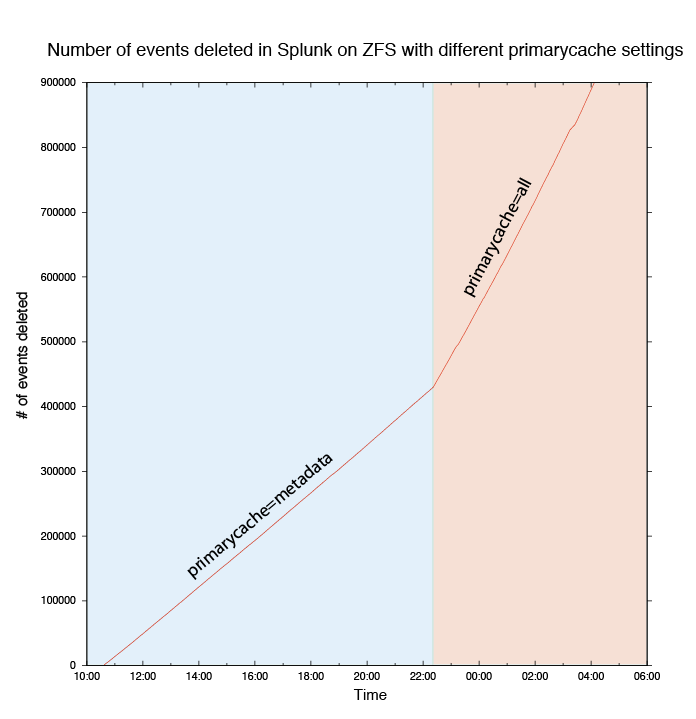

Last year I wrote a post about the ZFS primarycache setting, showing why it's not a good idea to mess with it. Here is a new example based on a real-world application.

Recently, my server crashed, and on restart Splunk decided it was a good idea to re-index a huge Apache log file. Apart from blowing through my daily index quota, this misbehavior filled the index with duplicate data. Getting rid of 1284408 events in Splunk can be a little resource-intensive. I won't detail the Splunk part of the operation: I ended up with 1285 batches of delete commands, which I launched with a simple for/do/done bash loop. After a while, I noticed that the process was slow and was generating lots of disk I/O. Annoying. So I checked:

```
# zfs get primarycache zdata/splunk
NAME          PROPERTY      VALUE     SOURCE
zdata/splunk  primarycache  metadata  local
```

Uncool. This setting had been set locally so that my toy (Splunk) would not grab the whole ARC on the server and hurt production. For efficiency's sake, I switched the primary cache back to all:

```
# zfs set primarycache=all zdata/splunk
```

The effect was almost instantaneous: the ARC filled with Splunk data and disk I/O plummeted.
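One way to watch this happen is through the ARC hit ratio. Below is a minimal sketch that assumes OpenZFS on Linux, where the counters live in `/proc/spl/kstat/zfs/arcstats` (two header lines followed by `name type data` rows). Other platforms expose the same counters differently, so treat the path as an assumption.

```python
# Minimal sketch: ARC hit ratio from OpenZFS kstat text.
# Assumption: Linux layout of /proc/spl/kstat/zfs/arcstats, i.e. a kstat
# header line, a "name type data" header, then one counter per line.
from pathlib import Path

ARCSTATS_PATH = Path("/proc/spl/kstat/zfs/arcstats")  # assumed Linux/OpenZFS path


def parse_arcstats(text: str) -> dict[str, int]:
    """Return the non-negative integer counters found in arcstats text."""
    stats: dict[str, int] = {}
    for line in text.splitlines()[2:]:  # skip the two header lines
        fields = line.split()
        if len(fields) == 3 and fields[2].isdigit():
            name, _kind, value = fields
            stats[name] = int(value)
    return stats


def hit_ratio(stats: dict[str, int]) -> float:
    """Fraction of ARC lookups served from memory: hits / (hits + misses)."""
    total = stats["hits"] + stats["misses"]
    return stats["hits"] / total if total else 0.0


if __name__ == "__main__":
    if ARCSTATS_PATH.exists():
        print(f"ARC hit ratio: {hit_ratio(parse_arcstats(ARCSTATS_PATH.read_text())):.1%}")
    else:
        print("arcstats not found (not OpenZFS on Linux?)")
```

These counters are cumulative since the module was loaded. To see the effect of a `zfs set`, take one sample before and one after, then compute the ratio on the differences of `hits` and `misses`.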

| primarycache | Deletes per second |
|---|---|
| metadata | 10.06 |
| all | 22.08 |
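Here is a quick back-of-the-envelope check of these numbers. The 1284408 event count and both rates come from above. Counting one "delete" per event is an assumption, and so is the idea that a whole run proceeds at a single constant rate.

```python
# Back-of-the-envelope arithmetic on the measured delete rates.
# Assumption: one "delete" per event, constant rate for the whole run.

EVENTS = 1_284_408     # duplicated events to remove
RATE_METADATA = 10.06  # deletes per second with primarycache=metadata
RATE_ALL = 22.08       # deletes per second with primarycache=all


def hours_needed(events: int, rate_per_s: float) -> float:
    """Wall-clock hours to delete `events` at a constant rate."""
    return events / rate_per_s / 3600


speedup = RATE_ALL / RATE_METADATA
print(f"speedup: {speedup:.2f}x")
print(f"metadata only: {hours_needed(EVENTS, RATE_METADATA):.1f} h")
print(f"all:           {hours_needed(EVENTS, RATE_ALL):.1f} h")
```

Under those assumptions, the two projections come out at roughly 35.5 hours (metadata only) and 16.2 hours (all). The actual run started before the switch, so a total of around 20 hours falls between the two.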

A 2.2x speedup on a very long operation (~20 hours here) is a very good argument in favor of primarycache=all for any ZFS user.
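If you suspect an old tweak like mine is still lurking, here is a minimal sketch that lists the datasets whose primarycache is not `all`. It relies on `zfs get` with `-H` (no header, tab-separated fields), `-r` (recursive), `-t filesystem,volume` and `-o name,value,source`. The helper names are hypothetical, and running the command requires the `zfs` CLI and suitable permissions.

```python
# Minimal sketch: find datasets where primarycache differs from "all".
# Hypothetical helpers; they wrap the real command
#   zfs get -r -H -t filesystem,volume -o name,value,source primarycache <pool>
import subprocess


def overridden_datasets(zfs_get_output: str) -> list[tuple[str, str, str]]:
    """Return (dataset, value, source) for every primarycache other than "all"."""
    result = []
    for line in zfs_get_output.splitlines():
        if not line.strip():
            continue
        # -H separates fields with single tabs; source may contain spaces
        name, value, source = line.split("\t", 2)
        if value != "all":
            result.append((name, value, source))
    return result


def zfs_get_primarycache(pool: str) -> str:
    """Run `zfs get` recursively on a pool and return its raw output."""
    cmd = ["zfs", "get", "-r", "-H", "-t", "filesystem,volume",
           "-o", "name,value,source", "primarycache", pool]
    return subprocess.run(cmd, check=True, capture_output=True, text=True).stdout
```

For example, `overridden_datasets(zfs_get_primarycache("zdata"))` would have reported `zdata/splunk` with `metadata` set `local`, plus any child datasets inheriting that value.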